
- 2026-06 We are excited to release our new project, ABot-Earth 0.5! Explore the official project page to learn more about its capabilities.
- 2026-04 Sat3DGen is now open source. We have released the full codebase on GitHub, the project page, and a Hugging Face demo. Feel free to try it out.
- 2026-03 I graduated with a PhD from Wuhan University.
- 2026-03 The paper Sat3DGen is accepted by ICLR 2026.
- 2026-02 The code of Sat2Density++ is released on GitHub.
- 2026-01 Sat2Density++ is accepted by T-PAMI.
- 2024-04 Two papers are accepted by CVPR 2024.
- 2023-07 Sat2Density is accepted by ICCV 2023.
- 2020-08 BGGAN is accepted by ECCVW 2020.
- 2020-07 Together with Congyu and Jiamin, we win the Winner Award at the ECCV AIM 2020 Challenge on Rendering Realistic Bokeh.


I'm interested in 3D vision and image processing. Much of my research is about inferring properties of the physical world and the camera (shape, motion, color, light, bokeh, etc.) from images.


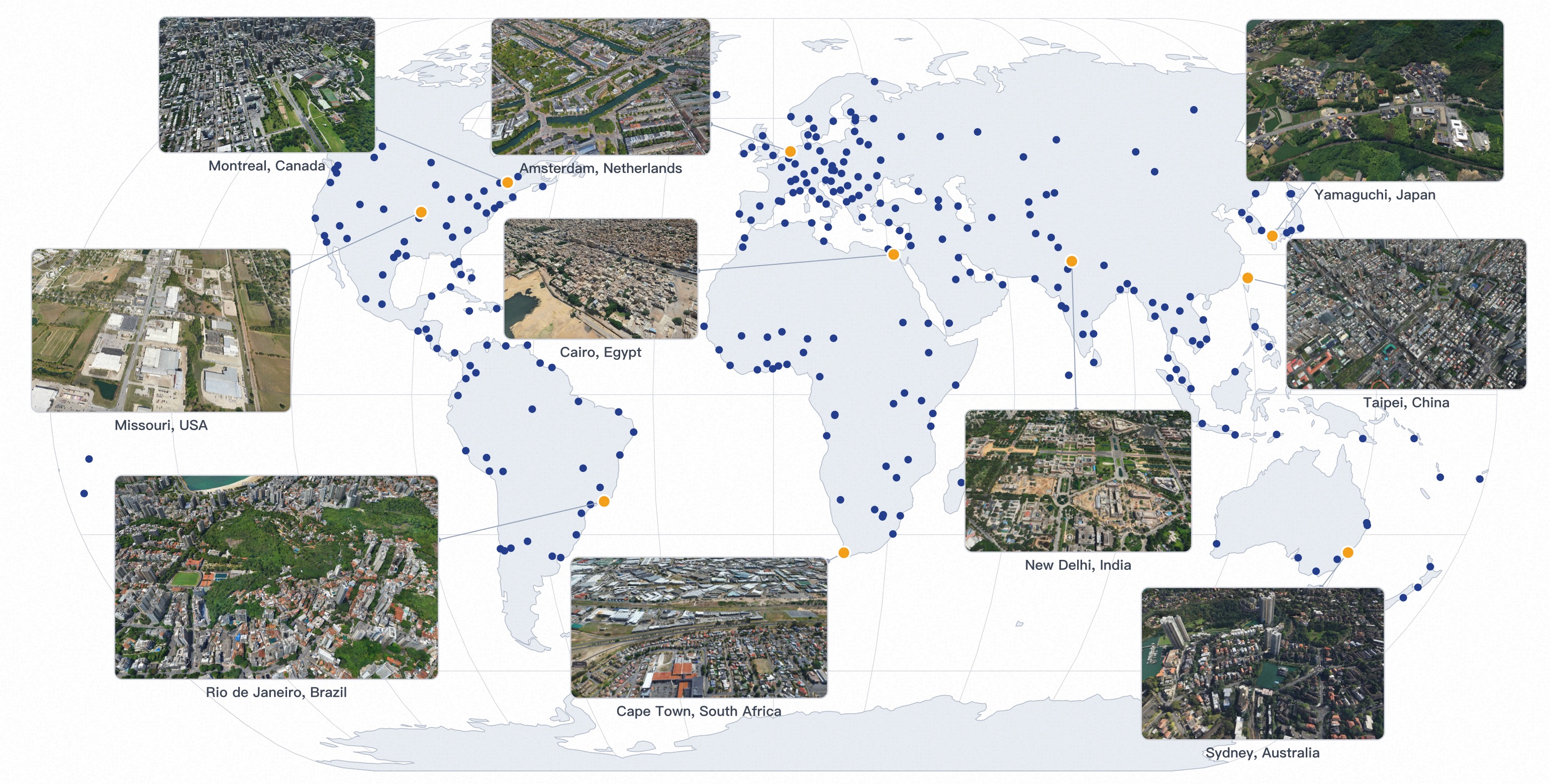

Ming Qian, Tianjian Ouyang, Mingchao Sun, Zijian Wang, Jincheng Xiong, Jiarong Han, Yongchang Zhang, Jiawei Zhang, Xu Wang, Yu Liu, Luyang Tang, Fei Yu, Zengye Ge, Mengmeng Du, Yuan Liu, Nianfei Fan, Song Wang, Yingliang Peng, Chunxue Jia, Yang Liu, Shiying Zeng, Haozhe Shi, Junnan Lai, Hongyu Pan, Zheng Wu, Mu Xu, Hang Zhang

project page

We present ABot-Earth 0.5, a generative 3D framework that synthesizes vast, seamless 3D environments from geospatially referenced satellite imagery. It is built on a novel generative model formulated directly with 3D Gaussian Splatting (3DGS) and trained on diverse real-world urban reconstructions to generate realistic geometry and textures. At inference, it synthesizes novel 3D scenes conditioned solely on satellite imagery in under 10 minutes per km², and its integrated hierarchical LOD structures enable real-time, interactive visualization on web-based map engines. Our official launch showcases an evolving 3DGS world spanning more than 300 cities across 190+ countries, with continuous global expansion.


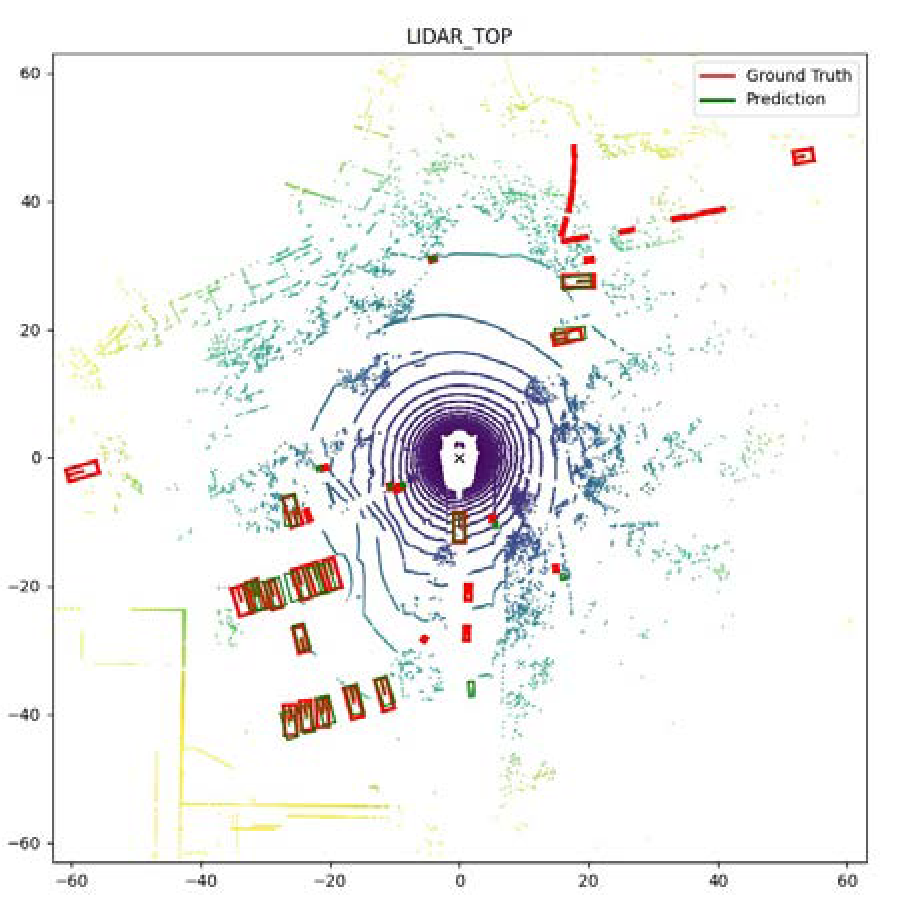

Ming Qian, Zimin Xia, Changkun Liu, Shuailei Ma, Wen Wang, Zeran Ke, Bin Tan, Hang Zhang, Gui-Song Xia

ICLR, 2026

project page / paper / code / Hugging Face demo

Sat3DGen is a feed-forward satellite-to-3D framework that learns a structured, view-consistent NeRF-style scene from 2D satellite/street-view supervision. It enables mesh export and large-area mesh generation, surround-view video rendering, semantic-map-to-3D synthesis, and single-image DSM estimation. The full codebase, model weights, and Hugging Face demo are now public.


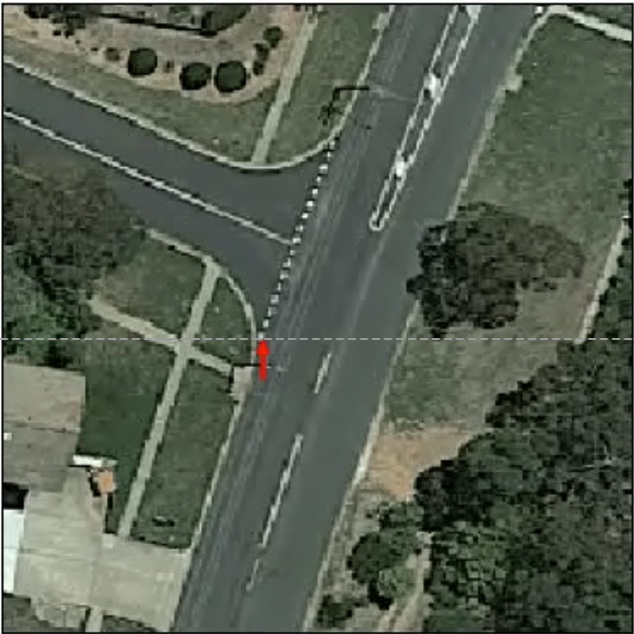

Ming Qian, Bin Tan, Qiuyu Wang, Xianwei Zheng, Hanjiang Xiong, Gui-Song Xia, Yujun Shen, Nan Xue

T-PAMI, 2026

project page / arXiv / code

The journal extension of Sat2Density significantly improves the consistency and quality of generated street-view videos and images.


Xianpeng Liu, Ce Zheng, Ming Qian, Nan Xue, Chen Chen, Zhebin Zhang, Chen Li, Tianfu Wu

CVPR, 2024

arXiv


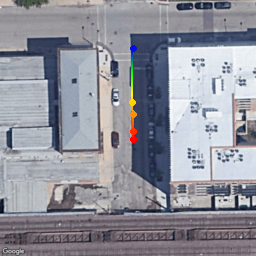

Ming Qian, Jincheng Xiong, Gui-Song Xia, Nan Xue

ICCV, 2023

project page / code / arXiv

Sat2Density focuses on the geometric nature of generating high-quality ground-level street-view videos conditioned on satellite images, learning from collections of satellite-ground image pairs.


Yuxin Jin*, Ming Qian*, Jincheng Xiong, Nan Xue, Gui-Song Xia

ICME, 2023

arXiv / Code / Paper

D-DFFNet takes the physical mechanism of defocus blur into account, which lets it successfully distinguish homogeneous regions. In addition, we propose EBD, a larger benchmark that covers more depth-of-field (DOF) cases. D-DFFNet achieves strong defocus blur detection results on multiple public test sets.


Ming Qian, Congyu Qiao, Jiamin Lin, Zhenyu Guo, Chenghua Li, Cong Leng, Jian Cheng

ECCVW, 2020

arXiv / Code

BGGAN synthesizes bokeh-effect images from bokeh-free images end to end. It ranked 1st in the ECCV AIM 2020 challenge.


Design and source code from Jon Barron's website.